Overview

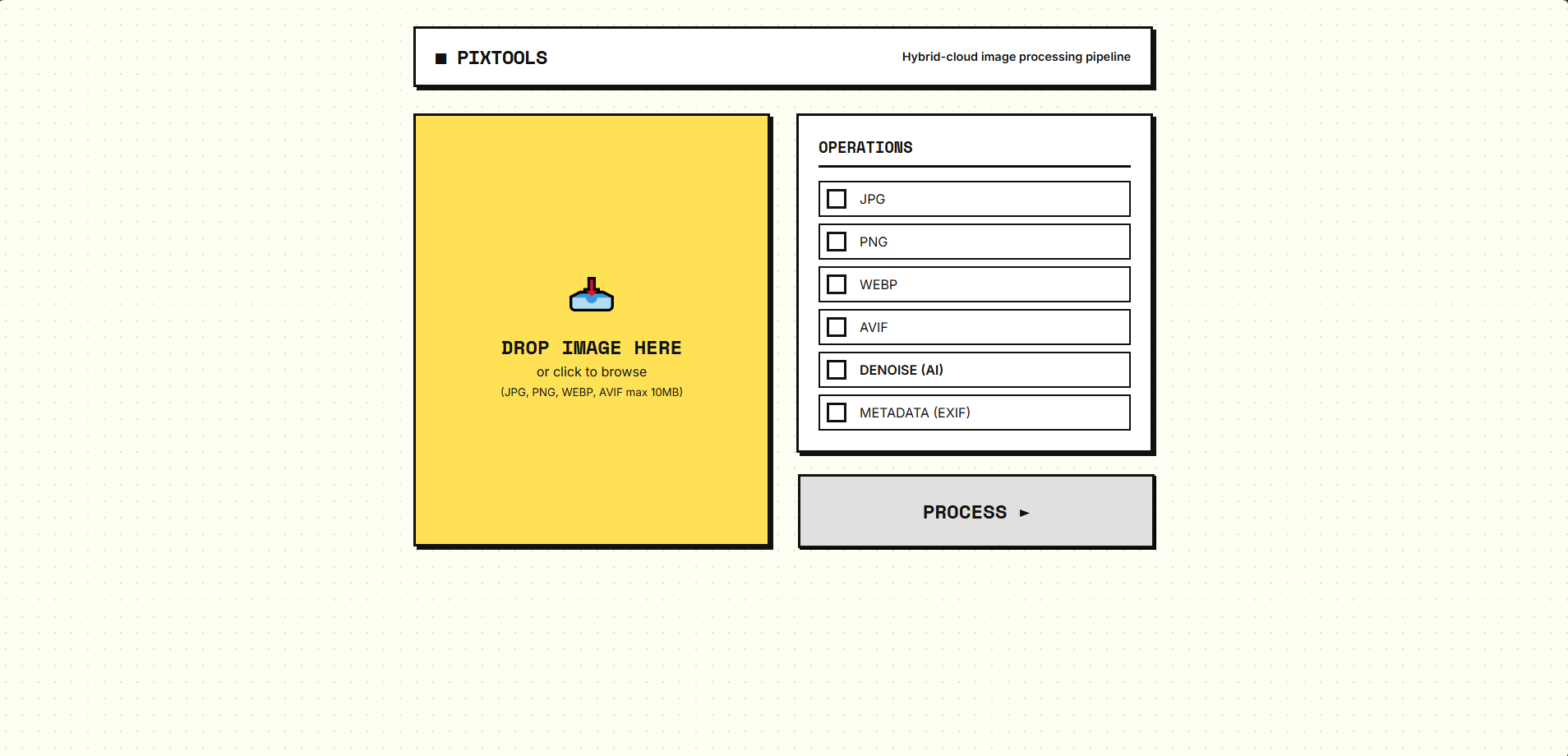

PixTools is a production-grade, distributed asynchronous image-processing system. Users upload images, choose operations (format conversion, DnCNN denoising, EXIF extraction), and receive outputs asynchronously when background processing completes.

The public HTTP edge is served by a Go API that validates requests, manages S3 uploads, writes Postgres job rows, checks Redis idempotency, and publishes Celery-compatible AMQP messages to RabbitMQ. The Python worker layer retains full ownership of pipeline orchestration, image processing, ML inference, metadata extraction, and archive generation.

The Scale-Up Struggle & Engineering Highlights

This system wasn't just deployed; it was architected to survive network drops, EC2 terminations, and infrastructure drift. Here are the real-world engineering challenges solved:

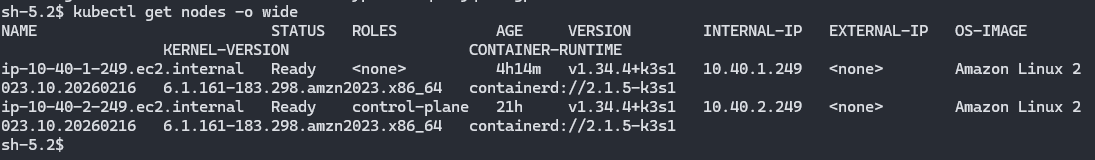

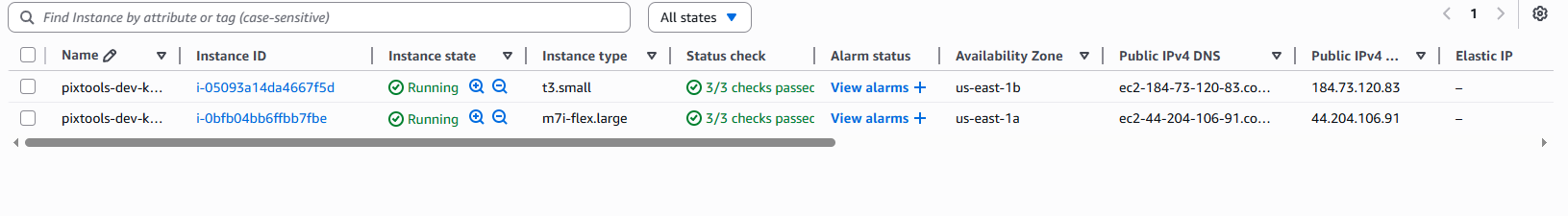

The Problem: The original unified K3s Auto Scaling Group used Spot Instances. When AWS inevitably reclaimed a spot instance under heavy ML load, it took down the control plane, RabbitMQ, and the API simultaneously. Deployments hung trying to resolve "Ghost Nodes".

The Fix: A massive dual-node re-architecture. The control plane, RabbitMQ, and Redis were moved to a stable, on-demand infra node. The API and Celery workers were pushed to a scalable array of Spot flex nodes, saving 70% in cost while ensuring the control plane always survived spot terminations.

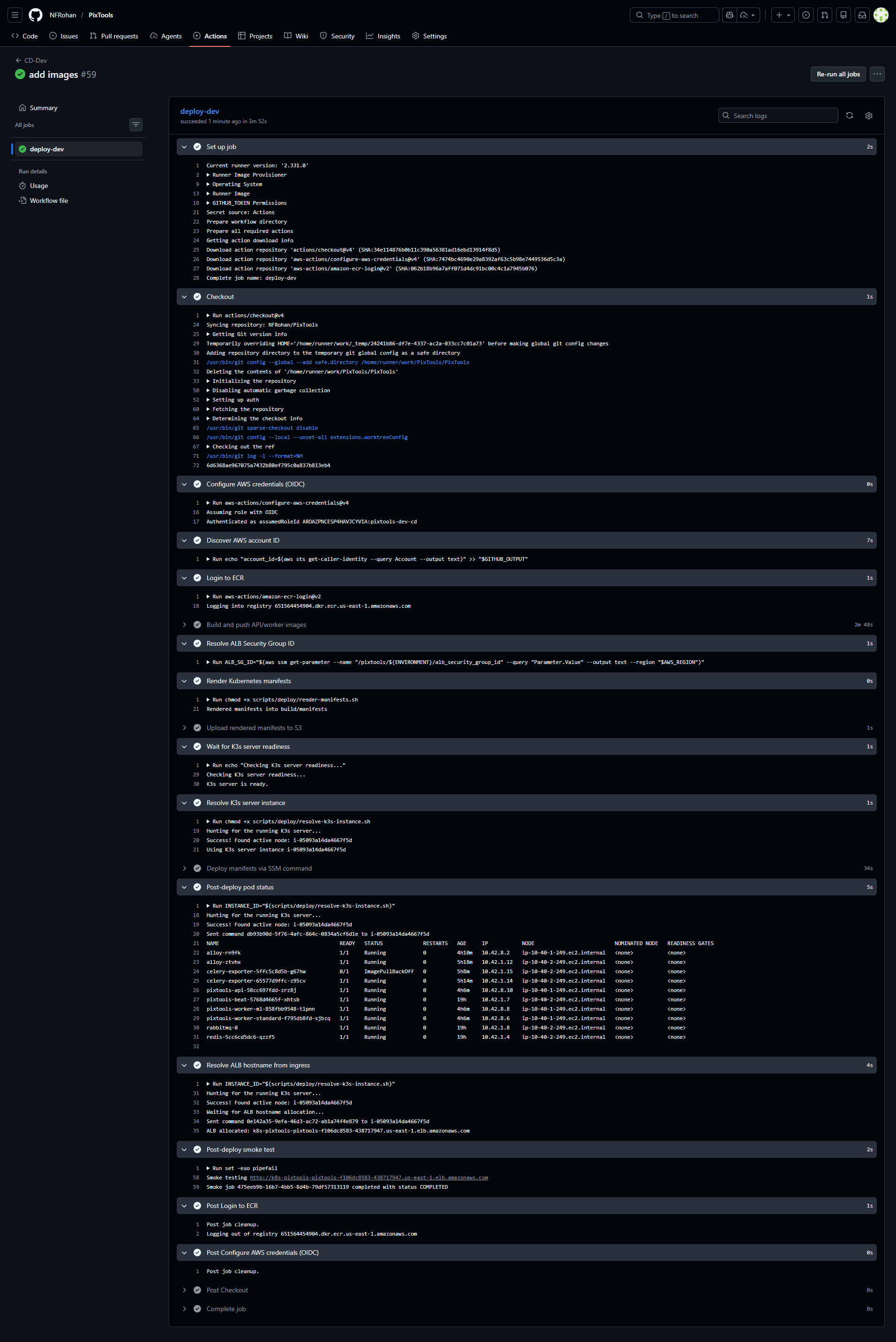

The Problem: Early CI/CD relied on hardcoded GitHub Secrets (e.g., ALB Security Group ID). When Terraform recreated these during architecture shifts, the pipeline natively broke trying to attach to ghost security groups.

The Fix: Fully dynamic state resolution. Terraform provisions and writes state directly into AWS Systems Manager (SSM) Parameter Store. GitHub Actions queries SSM at runtime, eliminating credential drift.

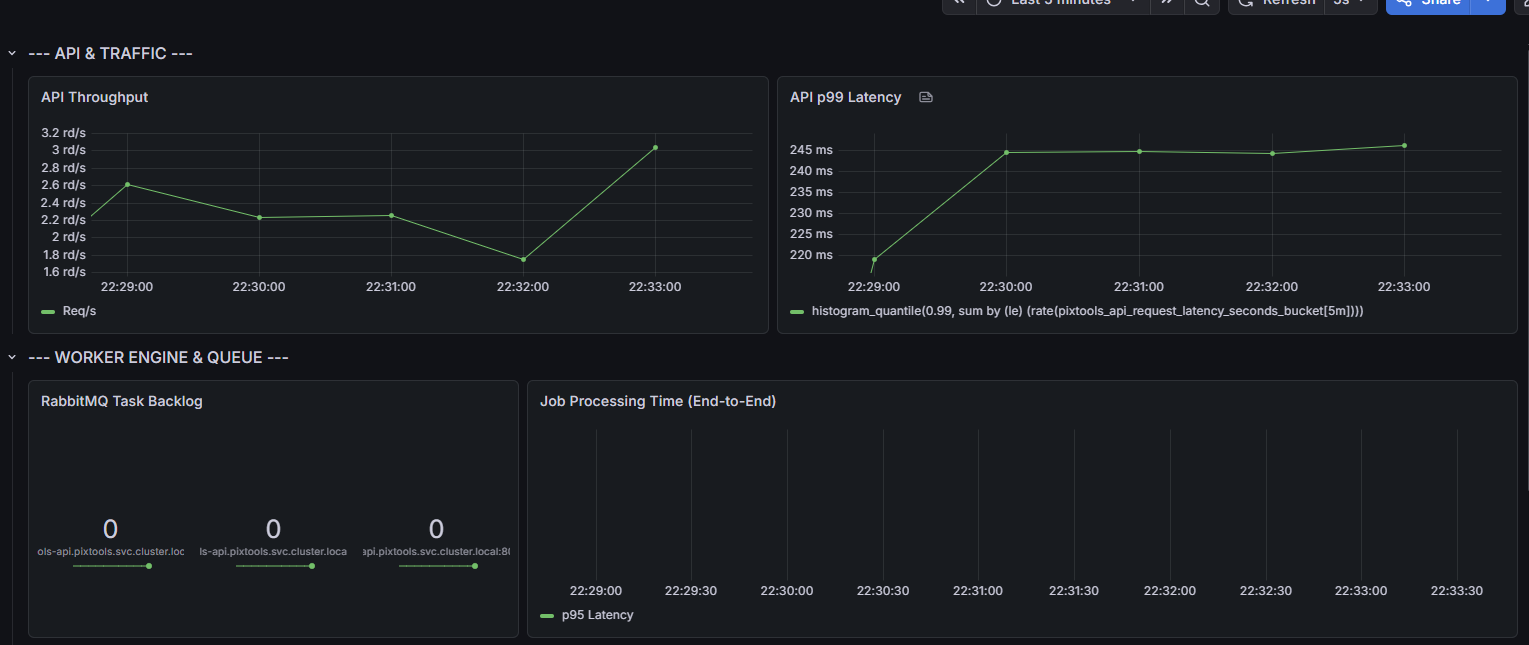

The Problem: Grafana dashboards showed processing times stuck at 0. The

API's /metrics endpoint was scraped perfectly by Alloy, but the API only queued

jobs; the actual Python workers had no embedded HTTP server to expose their metrics.

The Fix: Deployed a dedicated

celery-exporter container bridging worker queues and task completions directly

from RabbitMQ into Prometheus metrics, combined with enabling worker sent events.

The Problem: A hand-rolled bash script trying to kubectl drain

terminating spot instances frequently failed silently due to degraded networking during the

2-minute AWS warning window.

The Fix: Replaced it with the official AWS Node Termination Handler DaemonSet. Now, on a spot interruption notice, it cleanly cordons, taints, and evicts pods directly via the internal host network, guaranteeing zero dropped background jobs.

The Problem: The original FastAPI HTTP layer carried the full Python runtime and memory overhead just to validate requests, upload files to S3, write a job row, and enqueue background work. That was wasteful at the edge.

The Migration: I moved only the HTTP edge to

Go, keeping the Python worker system intact. Go now serves the frontend,

validates POST /api/process, writes the initial Postgres row, checks Redis

idempotency, uploads the raw file to S3, and publishes Celery-compatible AMQP messages to

RabbitMQ. Python still owns pipeline orchestration, image processing, ML inference, metadata

extraction, and archive generation.

The Hard Part: Preserving contract parity with an existing Python worker runtime and Alembic-managed schema. The first migration attempt exposed several real integration failures:

- Schema drift between GORM models and the Alembic-backed

jobstable - Dropped validation rules that the Python API had enforced

- Incorrect RabbitMQ publishing semantics when trying to use

gocelery - Lost request metadata propagation into Celery tasks

- An unregistered router task that caused accepted jobs to sit in

PENDING - Runtime config mismatch from Python's

postgresql+asyncpg://DSN format

The Fix: Treat the migration as a

compatibility problem, not a rewrite fantasy. I kept Alembic as the schema

authority, aligned the Go model with the existing database contract, restored backend

validation parity, removed gocelery, and published Celery-compatible AMQP

envelopes directly to the queue the Python workers already consumed. A dedicated router task

on the Python side lets Go hand off a small, stable payload while the worker layer continues

building the full Celery chord internally.

The Problem: Fixed replica counts meant the system either wasted money at

idle or dropped jobs under burst. Pod-level scaling alone wasn't enough — when all pods

maxed out on one node, new pods just sat Pending.

The Fix: Two-layer autoscaling:

- Pod scaling: API scales via HPA on CPU/memory. Standard workers scale via KEDA on RabbitMQ queue depth — the queue drives the workers, not CPU noise.

- Node scaling: Cluster Autoscaler watches for

unschedulable pods and grows the workload ASG. Required Terraform changes for ASG

autodiscovery tags, IAM permissions, and in-cluster RBAC for

jobs.batch,volumeattachments, andconfigmaps.

Live Proof: A bounded stress run showed workers

scaling 1 → 2 → 3 via KEDA, API scaling 1 → 3 via HPA, and a

controlled unschedulable probe validated full ASG scale-out from 1 → 2 workload

nodes with automatic K3s agent registration.

The Problem: Real autoscaling exposed deploy-path failures: K3s API flaps

during disruptive events, and Helm releases get stuck in pending-upgrade,

poisoning every subsequent deploy.

The Fix: API readiness gating before and after

disruptive steps. Retry wrappers around kubectl apply. Helm busy-release retry

logic with automatic rollback to the last stable revision when a release is stuck pending.

One live KEDA release had to be manually recovered before codifying the recovery path.

The Problem: RabbitMQ on local-path storage was coupled to the

infra node. Any infra-node replacement would strand the broker volume and make rotations

disruptive.

The Fix: Installed the AWS EBS CSI driver, added a

gp3 StorageClass, and moved the RabbitMQ StatefulSet PVC target. Hit two

independent failure modes during migration: Kubernetes rejected the in-place

volumeClaimTemplates patch (immutability), and EBS CSI couldn't create volumes

due to ec2:CreateVolume IAM denials. Fixed by explicit maintenance migration

paths and inline IAM policy expansions.

Production Stress Test Results

A formal in-region (us-east-1) performance suite replaced ad-hoc stress runs.

Temporary EC2 load-generator, orchestrated scenario matrix, pre/post cluster snapshots, and

runtime log collection across all components.

| Scenario | Submitted | HTTP p95 | Fail Rate |

|---|---|---|---|

| Baseline (30 VUs, 10m) | 3,779 | 651ms | 0.00% |

| Spike (120 VUs, 5m) | 6,119 | 8,539ms | 3.65% |

| Retry Storm (60 VUs, 5m) | 6,461 | 3,618ms | 0.22% |

| Starvation Mix (8m) | 5,645 | 6,137ms | 1.33% |

Key findings: Stable and production-acceptable at moderate sustained load.

Under aggressive concurrency, API probe timeouts and app-node CPU saturation (100%) are the

primary degradation drivers. ML queue wait reaches ~2.5h under sustained heavy-job pressure,

confirming the need for dedicated ML capacity. Scale ceilings (max=3) are the

hard bottleneck — raising them is the highest-impact remediation.

Architecture

flowchart TD

subgraph AWS["AWS Cloud"]

ALB[ALB Ingress]

RDS[(PostgreSQL 16\nRDS)]

S3[(S3 Buckets)]

EBS[(EBS gp3 PVC)]

subgraph K3s["K3s Cluster"]

subgraph InfraNode["Infra Node (On-Demand m7i-flex.large)"]

ControlPlane[K3s Control Plane]

RMQ(RabbitMQ)

REDIS(Redis)

BEAT(Celery Beat)

ALBCtrl(ALB Controller)

CEXP(Celery Exporter)

KEDA(KEDA Operator)

end

subgraph WorkloadNode["Workload Nodes (Spot m7i-flex.large, max 3)"]

API(Go API Edge)

W_STD(Celery Worker Standard)

W_ML(Celery Worker ML)

NTH(AWS Node Termination Handler)

end

ALLOY(Alloy DaemonSet)

CA(Cluster Autoscaler)

end

end

GrafanaCloud["☁️ Grafana Cloud (Logs, Metrics, Traces)"]

User[Frontend User] --> ALB

ALB --> API

API -.->|Idempotency/Locking| REDIS

API -.->|Job Tracking| RDS

API -->|Publishes AMQP| RMQ

API -->|Uploads Image| S3

RMQ -->|Consumes| W_STD

RMQ -->|Consumes| W_ML

RMQ -.->|Queue Depth| KEDA

KEDA -.->|Scales| W_STD

W_STD -->|Processes| S3

W_ML -->|Infers| S3

W_STD -.->|Updates Status| RDS

W_ML -.->|Updates Status| RDS

RMQ -.->|State| EBS

BEAT -->|Schedules| RMQ

CEXP -.->|Reads Events| RMQ

ALLOY -->|Scrapes| API

ALLOY -->|Scrapes| CEXP

ALLOY ==>|Ships Telemetry| GrafanaCloud

NTH -.->|Cordons & Drains| ControlPlane

CA -.->|Scales Workload ASG| WorkloadNode

Runtime Topology

- Ingress & API: AWS ALB Controller routing to K3s Pods running the Go HTTP edge. Go handles validation, S3 uploads, Postgres writes, and AMQP publishing.

- Worker Layer: Python Celery workers consume from RabbitMQ, owning all image processing, ML inference, and pipeline orchestration.

- Message Broker: RabbitMQ StatefulSet with Dead Letter Exchanges for failures.

- State & Locking: Redis for transient locks and PostgreSQL 16 on AWS RDS for persistent tracking.

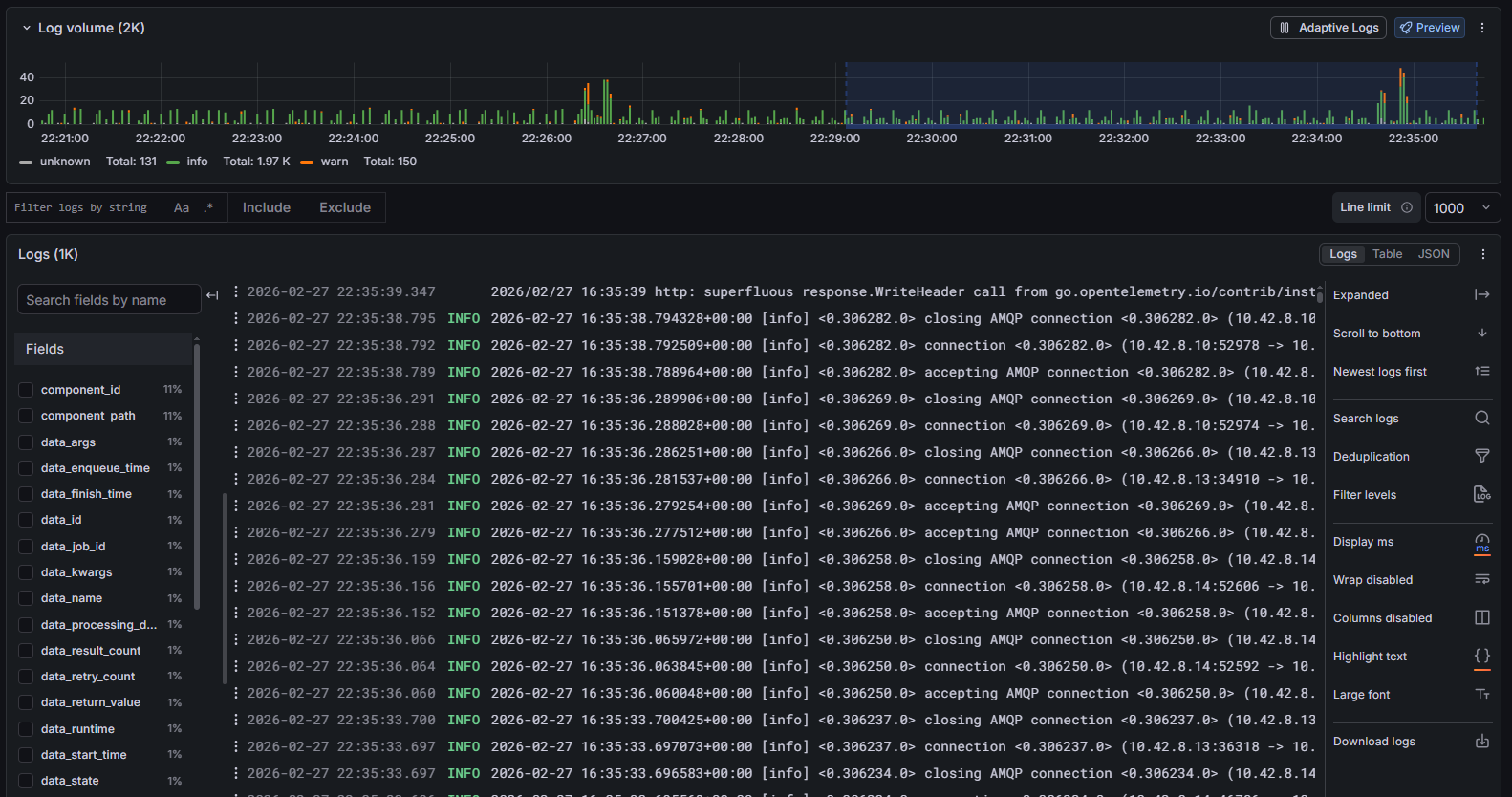

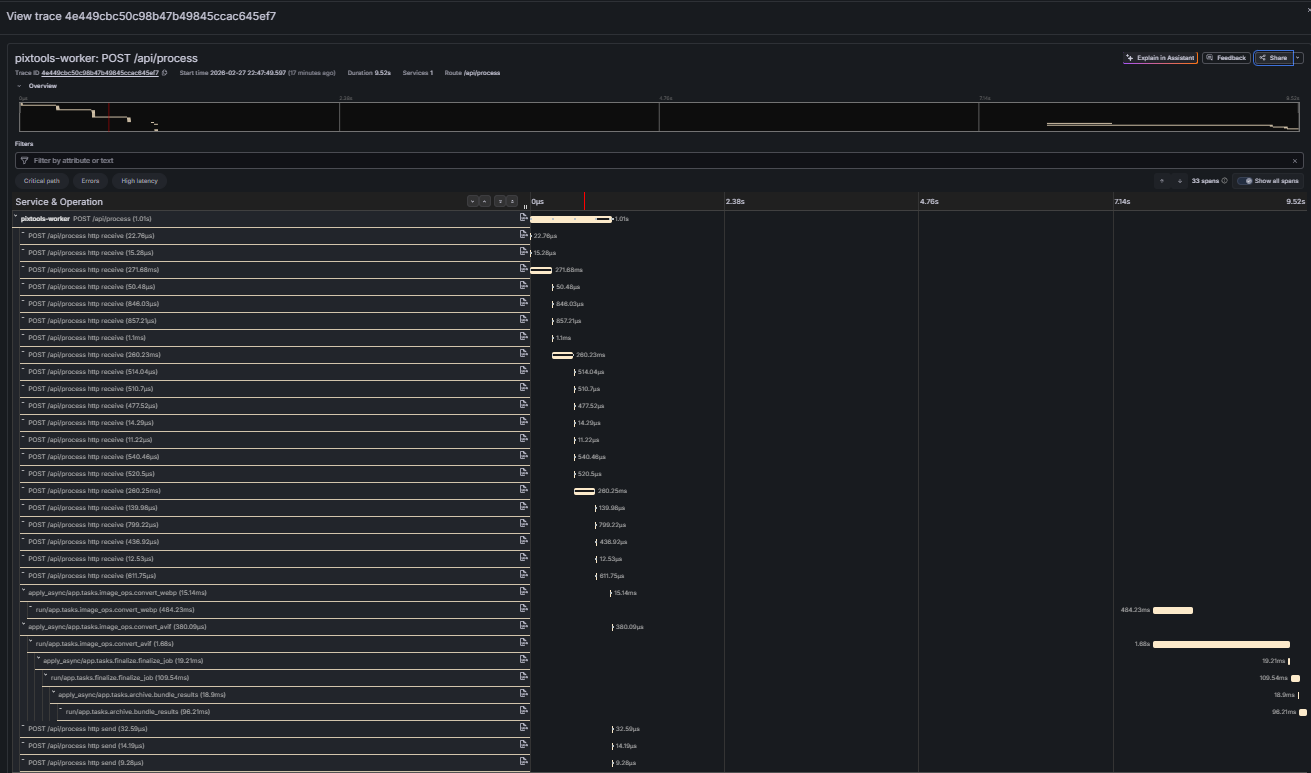

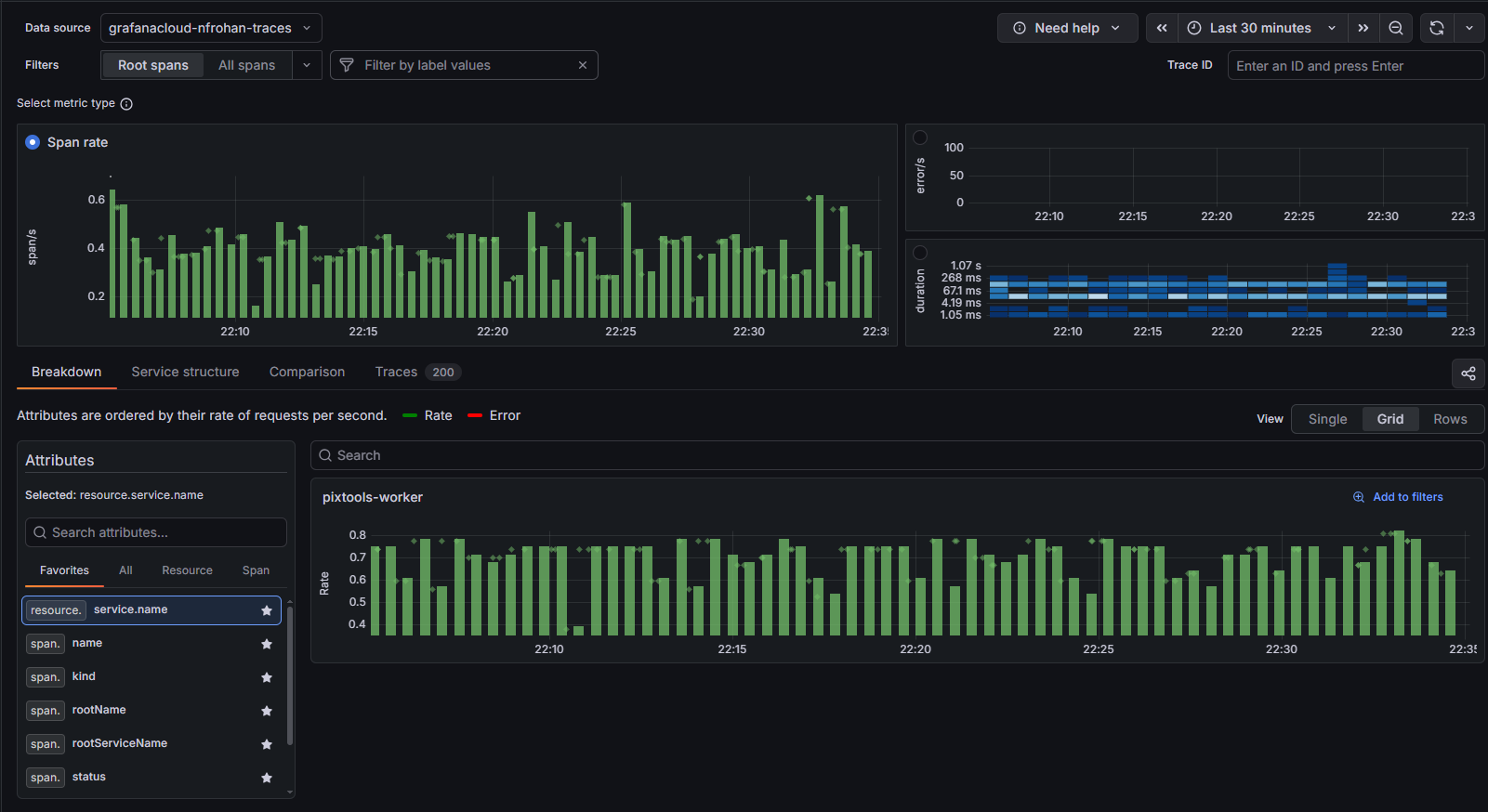

- Data Plane: K3s Agents on scalable

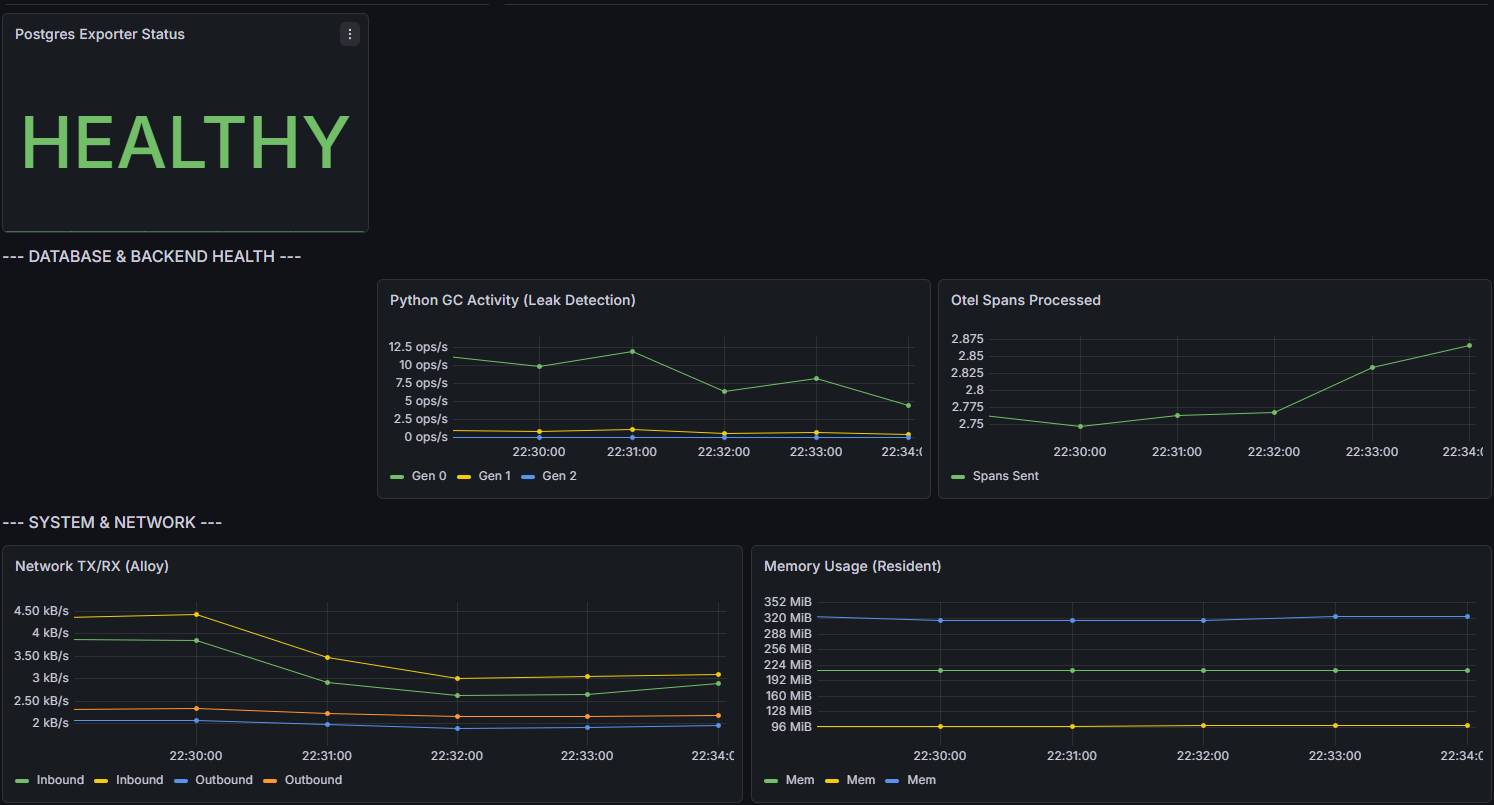

m7i-flex.largespot instances. - Observability: Alloy → Grafana Cloud LGTM. Metrics, Loki logs, and Tempo openTelemetry traces via a single DaemonSet.